What is LangSmith and How Does It Work?

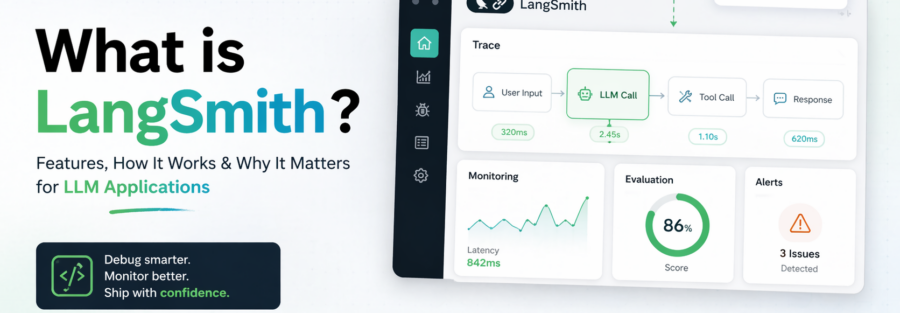

Building an LLM application is surprisingly straightforward. Making it reliable, debuggable, and production-ready — that’s an entirely different challenge. LangSmith was created to bridge exactly that gap.

The world of AI development has exploded. Thousands of teams are now shipping chatbots, AI agents, RAG pipelines, and autonomous systems powered by large language models. But unlike traditional software, LLM apps are probabilistic — they don’t behave consistently, their failure modes are opaque, and tracing a bug inside a neural network is nearly impossible with conventional tools.

Enter LangSmith — a purpose-built platform from the creators of LangChain that gives developers complete visibility into every step an LLM takes. Think of it as Datadog or New Relic, but designed from the ground up for the unique challenges of AI applications.

What Exactly Is LangSmith?

LangSmith is a unified, framework-agnostic platform for debugging, testing, evaluating, and monitoring applications built with large language models. Launched in closed beta in July 2023 by LangChain, Inc., the team behind the open-source LangChain framework, it reached general availability, with paid plans, in February 2024.

It is important to understand what LangSmith is not. It is not a model provider (it doesn’t generate AI responses). It is not a development framework like LangChain itself. LangSmith is the infrastructure layer — the operational backbone that makes LLM applications observable, testable, and reliable once you’ve built them.

LangSmith vs. LangChain: LangChain helps you build LLM workflows using modular components like chains, agents, and memory. LangSmith helps you ensure those workflows run smoothly by providing monitoring, debugging, and evaluation tools in a unified interface.

Critically, LangSmith is framework-agnostic. Even if you’re not using LangChain at all, you can integrate LangSmith into pipelines built with the OpenAI SDK, Anthropic SDK, Vercel AI SDK, LlamaIndex, or any custom implementation via Python, TypeScript, Go, or Java SDKs.
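As a rough illustration of the framework-agnostic path, the Python SDK provides a `traceable` decorator that can wrap an ordinary function, with or without LangChain. The sketch below assumes the `langsmith` package is installed and that tracing is switched on through environment variables such as `LANGSMITH_TRACING` and `LANGSMITH_API_KEY`. The function `summarize_ticket` and its logic are hypothetical stand-ins for a real model call, and the import is guarded so the snippet still runs where the package is absent.

```python
try:
    # Decorator from the LangSmith Python SDK (pip install langsmith).
    from langsmith import traceable
except ImportError:
    # Fallback so the example runs without the SDK: a no-op decorator that
    # supports both the @traceable and @traceable(...) forms.
    def traceable(*args, **kwargs):
        if len(args) == 1 and callable(args[0]) and not kwargs:
            return args[0]          # used as @traceable
        return lambda func: func    # used as @traceable(...)


@traceable(name="summarize_ticket")
def summarize_ticket(text: str) -> str:
    """Hypothetical step; a real app would call a model provider here."""
    first_sentence = text.split(".")[0].strip()
    return first_sentence[:80]


if __name__ == "__main__":
    print(summarize_ticket("Customer cannot log in. They tried resetting twice."))
```

When tracing is enabled, each call to the decorated function should be recorded as a run with its inputs and output. When it is not, the function behaves like any other Python function.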

The Problem LangSmith Solves

Traditional software is largely deterministic. If something breaks, you add a log statement, set a breakpoint, and trace the issue. LLMs are fundamentally different: their outputs are probabilistic, their reasoning paths are often opaque, and their failure modes include subtle hallucinations, tool-use errors, infinite loops, and context mismanagement that appear only under specific real-world conditions.

When an LLM application misbehaves in production, developers are left asking: Was it a bad prompt? A poor retrieval result from the vector database? A tool that returned unexpected data? Without visibility into every step, debugging becomes guesswork. This is the observability crisis that LangSmith was built to solve.

The core challenge: LLM applications involve complex reasoning paths, dynamic tool usage, and multi-step chains. When errors occur — such as incorrect outputs or tool invocation failures — traditional debugging methods fall short. LangSmith provides detailed, sequential visibility into each interaction with LLMs.
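To make "sequential visibility" concrete, here is a minimal sketch of the kind of nested record a trace represents: each step stores its name, inputs, output, and duration, and child steps nest under their parent. The `Run` class, the `Tracer` class, and the `render` helper are illustrative names invented for this article, not LangSmith's data model or API.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Any, List, Optional


@dataclass
class Run:
    """One recorded step (hypothetical structure, not LangSmith's schema)."""
    name: str
    inputs: Any
    output: Any = None
    error: Optional[str] = None
    duration_s: float = 0.0
    children: List["Run"] = field(default_factory=list)


class Tracer:
    def __init__(self) -> None:
        self.roots: List[Run] = []
        self._stack: List[Run] = []

    @contextmanager
    def span(self, name: str, inputs: Any):
        run = Run(name=name, inputs=inputs)
        # Attach to the currently open step, or start a new top-level trace.
        (self._stack[-1].children if self._stack else self.roots).append(run)
        self._stack.append(run)
        start = time.perf_counter()
        try:
            yield run
        except Exception as exc:
            run.error = repr(exc)
            raise
        finally:
            run.duration_s = time.perf_counter() - start
            self._stack.pop()


def render(run: Run, depth: int = 0) -> List[str]:
    """Flatten a trace into indented lines, in execution order."""
    status = "error" if run.error else "ok"
    lines = [f"{'  ' * depth}{run.name} [{status}]"]
    for child in run.children:
        lines.extend(render(child, depth + 1))
    return lines


tracer = Tracer()
with tracer.span("answer_question", {"question": "What is our refund policy?"}) as root:
    with tracer.span("retrieve", {"query": "refund policy"}) as step:
        step.output = ["Refunds are accepted within 30 days."]
    with tracer.span("generate", {"context": step.output}) as gen:
        gen.output = "You can request a refund within 30 days."
    root.output = gen.output

print("\n".join(render(tracer.roots[0])))
```

Reading the rendered tree top to bottom shows which step ran, in what order, and whether it failed. That is the question a developer usually needs answered first when an output looks wrong.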

Core Features of LangSmith

LangSmith is organized around four core capabilities that together cover the full LLM application lifecycle:

Tracing: Capture every step of an LLM run, from input to output, including tool calls, chain steps, memory reads, and token usage.

Monitoring: Real-time dashboards for latency, cost, error rates, token consumption, and custom quality metrics.

Evaluation: Build datasets from production traces, run automated evaluations, and score model outputs with human review or AI-powered graders.

Deployment: Deploy agents as managed Agent Servers with versioning, rollbacks, and support for A2A, MCP, and Agent Protocol standards.
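The evaluation capability follows a simple loop: run the application over a dataset of examples, score each output, and aggregate the scores. Below is a minimal sketch of that loop in plain Python. The names `Example`, `run_evaluation`, `exact_match`, and the toy `app` are hypothetical, and a real setup would rely on the platform's own datasets and graders rather than this hand-rolled version.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Example:
    inputs: Dict[str, str]
    expected: str


def exact_match(output: str, expected: str) -> float:
    """Simplest possible grader: 1.0 on a case-insensitive match, else 0.0."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def run_evaluation(
    target: Callable[[Dict[str, str]], str],
    dataset: List[Example],
    grader: Callable[[str, str], float],
) -> Dict[str, object]:
    """Run the target over each example and return per-example and mean scores."""
    scores = [grader(target(ex.inputs), ex.expected) for ex in dataset]
    mean = sum(scores) / len(scores) if scores else 0.0
    return {"scores": scores, "mean": mean}


def app(inputs: Dict[str, str]) -> str:
    """Toy stand-in for an LLM app: looks up a canned answer."""
    answers = {"capital of france": "Paris", "2 + 2": "4"}
    return answers.get(inputs["question"].lower(), "I don't know")


dataset = [
    Example({"question": "Capital of France"}, "paris"),
    Example({"question": "2 + 2"}, "4"),
    Example({"question": "Capital of Peru"}, "Lima"),
]

report = run_evaluation(app, dataset, exact_match)
print(report)
```

Swapping `exact_match` for a more forgiving grader (for instance, one that asks another model to judge the answer) changes the scores without changing the loop, which is why the grader is passed in as a parameter.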

LangSmith vs. Alternatives

LangSmith isn’t the only player in the LLM observability space. Here’s how it compares to other common tools:

| Tool | Best For | LangSmith Advantage |
|---|---|---|
| Helicone | Simple proxy-based LLM logging | Deeper tracing, evaluation, and deployment features |
| Weights & Biases | ML experiment tracking & model training | Purpose-built for production LLM app observability |
| Datadog / New Relic | General application monitoring | LLM-native concepts: token costs, prompt versions, agent steps |
| Arize AI | ML monitoring & data quality | Tighter integration with LangChain ecosystem & agent workflows |
| Galileo AI | LLM hallucination detection | End-to-end platform: trace → eval → deploy in one place |

Real-World Use Cases

Customer Support AI Agents

Companies like Klarna have used LangSmith to build AI-powered customer support systems that handle thousands of interactions per day. LangSmith’s tracing helped identify where agents were failing to resolve issues, contributing to a reported 80% reduction in average customer query resolution time. Teams can monitor live sessions, annotate failures, and build targeted evaluation datasets to keep quality high as agent behavior evolves.

RAG (Retrieval-Augmented Generation) Pipelines

RAG applications combine vector database retrieval with LLM generation. Failures can occur at either stage — a retrieved chunk might be irrelevant, or the LLM might ignore good context. LangSmith traces capture both stages, making it easy to distinguish retrieval failures from generation failures and fix the right layer.
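One way to picture this distinction is a check that inspects both stages of a recorded run. The sketch below is a simplified heuristic, not a LangSmith feature. It assumes each record carries the retrieved chunks, the generated answer, and a known reference fact, and the substring test stands in for a real relevance or faithfulness grader. The function name `classify_rag_failure` is hypothetical.

```python
from typing import List


def classify_rag_failure(retrieved: List[str], answer: str, reference: str) -> str:
    """Attribute a RAG outcome to the retrieval or the generation stage.

    Assumption: a case-insensitive substring match is a good enough proxy
    for "the fact is present"; real graders are usually more nuanced.
    """
    ref = reference.lower()
    in_context = any(ref in chunk.lower() for chunk in retrieved)
    in_answer = ref in answer.lower()
    if in_answer:
        return "ok"
    if not in_context:
        return "retrieval_failure"   # the right fact never reached the model
    return "generation_failure"      # the fact was retrieved but not used


cases = [
    (["Refunds are accepted within 30 days."], "Refunds are accepted within 30 days of purchase."),
    (["Shipping takes 5 business days."], "Refunds take 14 days."),
    (["Refunds are accepted within 30 days."], "We do not offer refunds."),
]

results = [classify_rag_failure(chunks, answer, "30 days") for chunks, answer in cases]
print(results)
```

A retrieval failure points at the index, chunking, or query, while a generation failure points at the prompt or the model, so the two labels lead to different fixes.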

Autonomous Multi-Agent Systems

As agent architectures become more complex — with one LLM orchestrating other specialized agents — the need for traceability explodes. LangSmith treats sub-agent delegation, conversation threads, tool calls, and memory as first-class concepts, giving teams a coherent view of a multi-agent run that would otherwise be impossible to debug.

Business Process Automation

C.H. Robinson used LangSmith to build an AI system that automated 5,500 orders per day, saving over 600 hours of manual work daily. For mission-critical workflows like this, the monitoring and alerting features of LangSmith are essential to catching regressions before they impact business operations.
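A regression check of the kind described here can be sketched as a comparison of recent run metrics against thresholds. The record fields, the `summarize` and `check_alerts` helpers, and the threshold values are all illustrative assumptions. The platform's own dashboards and alerts are configured through its interface rather than with code like this.

```python
import math
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class RunRecord:
    latency_s: float
    total_tokens: int
    error: bool


def percentile(values: List[float], pct: float) -> float:
    """Nearest-rank percentile; pct is in the range (0, 100]."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(runs: List[RunRecord]) -> Dict[str, float]:
    """Aggregate a window of runs into a few headline metrics."""
    if not runs:
        raise ValueError("need at least one run to summarize")
    return {
        "error_rate": sum(r.error for r in runs) / len(runs),
        "p95_latency_s": percentile([r.latency_s for r in runs], 95),
        "avg_tokens": sum(r.total_tokens for r in runs) / len(runs),
    }


def check_alerts(summary: Dict[str, float], thresholds: Dict[str, float]) -> List[str]:
    """Return the names of metrics that exceed their threshold."""
    return [name for name, limit in thresholds.items() if summary[name] > limit]


runs = [
    RunRecord(0.8, 420, False),
    RunRecord(1.1, 510, False),
    RunRecord(0.9, 480, False),
    RunRecord(4.2, 1900, True),
    RunRecord(1.0, 450, False),
]

summary = summarize(runs)
alerts = check_alerts(summary, {"error_rate": 0.1, "p95_latency_s": 3.0})
print(summary)
print(alerts)
```

In this toy window a single slow, failing run is enough to trip both thresholds, which is the behavior a team would want for a workflow that processes thousands of orders a day.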

Deployment & Security Options

LangSmith is available in three deployment models to match different infrastructure and compliance needs:

Cloud: Hosted at smith.langchain.com. Data stored in GCP us-central1. Easiest to get started, with a generous free tier.

Self-hosted: Deploy LangSmith on your own Kubernetes cluster in AWS, GCP, or Azure. Data never leaves your environment.

Hybrid: Bring-your-own-cloud for teams with specific data residency requirements. Combines a managed control plane with your own data plane.

LangSmith maintains SOC 2 Type 2, HIPAA, and GDPR compliance, making it suitable for regulated industries. Importantly, LangChain does not train on your data — you retain all rights to the traces and data flowing through the platform.

The Bottom Line

As LLM applications move from prototype to production, the gap between “works in a demo” and “works reliably for real users” has become one of the defining challenges in AI engineering. LangSmith fills that gap by making AI applications observable, testable, and continuously improvable.

Whether you’re building a simple chatbot or a complex multi-agent system, LangSmith provides the infrastructure to understand what your AI is doing, catch problems before users do, and systematically improve performance over time. With a free tier available and integrations across virtually every major LLM framework, it’s one of the most accessible and powerful tools in any AI developer’s toolkit in 2026.

If you’re building with LLMs and haven’t explored LangSmith yet, there’s never been a better time to start.