What is Galileo AI? The Evaluation Intelligence Platform for Production GenAI

Deploying AI without evaluation is like shipping code without tests. Galileo AI was built to close that gap — bringing rigorous, automated evaluation to every stage of the GenAI lifecycle.

The big picture: what is Galileo AI?

Galileo AI is an Evaluation Intelligence Platform for generative AI applications and agents. Based in San Francisco, it was founded by former engineers from Google AI, Google Brain, and Uber AI — people who lived the pain of evaluating large AI systems at scale and decided to build the solution.

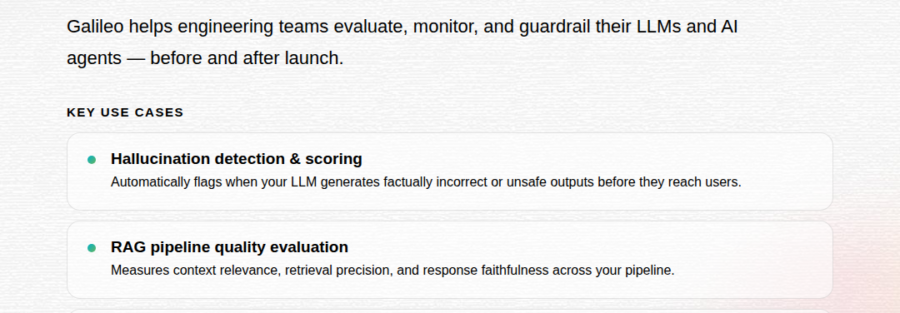

Unlike generic monitoring tools, Galileo is purpose-built for the unique challenges of GenAI: hallucinations, tool-use failures, RAG retrieval errors, and the unpredictable behavior of multi-step AI agents. The platform covers the full AI lifecycle — from development and testing through deployment and real-time production monitoring.

Three core modules, one platform

Galileo’s platform is organized around three pillars that together cover the entire AI deployment lifecycle.

The secret engine: Luna-2 models

What makes Galileo practically different from competitors is its Luna-2 evaluation models — lightweight, fine-tuned versions of Llama (3B and 8B parameter variants) built specifically for AI evaluation tasks.

Why Luna-2 changes the math

Traditional LLM-as-judge evaluation using GPT-class models is expensive and slow. Luna-2 distills that intelligence into compact models that deliver comparable accuracy at a fraction of the cost — making 100% production traffic monitoring economically viable for the first time.
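To make the cost argument concrete, here is a rough back-of-the-envelope sketch. Every number in it is a placeholder chosen for illustration: the per-token prices, traffic volume, and token counts are assumptions, not Galileo's or any vendor's actual pricing, and the `EvaluatorPricing` and `monthly_eval_cost` names are hypothetical helpers rather than part of any Galileo SDK. It only shows how evaluator price and sampling rate combine into a monthly bill.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvaluatorPricing:
    """Hypothetical price of one evaluator, in USD per million tokens."""
    name: str
    usd_per_million_tokens: float


def monthly_eval_cost(requests_per_day: int,
                      tokens_per_eval: int,
                      pricing: EvaluatorPricing,
                      sample_rate: float = 1.0,
                      days: int = 30) -> float:
    """Cost of scoring a share of production traffic for one month."""
    if not 0.0 <= sample_rate <= 1.0:
        raise ValueError("sample_rate must be between 0 and 1")
    evaluated_requests = requests_per_day * days * sample_rate
    total_tokens = evaluated_requests * tokens_per_eval
    return total_tokens / 1_000_000 * pricing.usd_per_million_tokens


# Placeholder prices for illustration only, NOT real vendor pricing.
large_judge = EvaluatorPricing("large general-purpose judge", 5.00)
small_judge = EvaluatorPricing("compact fine-tuned evaluator", 0.10)

# Assumed workload: 1M requests per day, 2,000 tokens per evaluation.
for judge in (large_judge, small_judge):
    cost = monthly_eval_cost(1_000_000, 2_000, judge)
    print(f"{judge.name}: ${cost:,.2f} per month at 100% coverage")
```

Under these assumed numbers, a team paying for the large judge would have to sample about 2% of traffic (`sample_rate=0.02`) to match what the compact evaluator costs at full coverage. Real ratios depend entirely on actual prices and workloads, so substitute your own figures before drawing conclusions.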

Key use cases trending in 2026

Who is Galileo AI built for?

Galileo serves ML engineers, AI product teams, and enterprise AI leaders who are shipping GenAI to real users and need more than vibe-checks to know if it’s working. Customers range from fast-growing startups to some of the world’s largest companies.

The platform integrates with the tools enterprise teams already use — Google Cloud, Vertex AI, BigQuery — and is available as SaaS, Virtual Private Cloud, or fully on-premises for regulated industries.

Galileo AI vs. Arize AI: what’s the difference?

Both platforms tackle AI observability, but they come at it differently. Arize has a longer track record in traditional ML monitoring and more transparent public pricing, making it approachable for teams with diverse model types. Galileo’s competitive edge is its GenAI-first design: the Luna-2 guardrail models, purpose-built hallucination scoring, and agent-level tracing are native — not retrofitted from an ML monitoring tool.

For teams primarily building LLM apps, RAG systems, or AI agents, Galileo’s evaluation depth is hard to match. For teams with a mixed ML and GenAI portfolio, Arize may offer broader coverage. In 2026, many enterprise teams are evaluating both.

Should your team use Galileo AI?

If you’re running GenAI in production — or planning to — Galileo is worth evaluating seriously. The free tier lets developers get hands-on with the platform without a sales conversation. For teams that have already experienced silent AI failures, hallucination incidents, or agent misfires in production, the Luna-2 cost model makes monitoring 100% of traffic financially realistic for the first time.

Where Galileo may not be the right fit: very early-stage teams with no live AI system yet, or organizations needing fully transparent per-seat pricing upfront. The sales-led pricing model for paid plans can slow down procurement cycles at smaller companies.

Bottom line

Galileo AI is setting the standard for what AI evaluation should look like: automated, continuous, cost-effective, and built on purpose-trained models rather than expensive general-purpose LLMs. As enterprises push GenAI deeper into critical workflows in 2026, platforms like Galileo are moving from “nice to have” to non-negotiable engineering infrastructure.