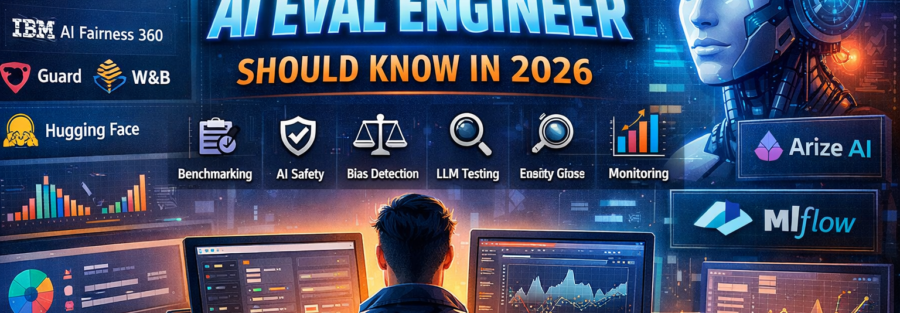

Tools Every AI Eval Engineer Should Know in 2026 (With Real Platforms)

AI evaluation in 2026 is no longer theoretical. It requires hands-on experience with specialized platforms built for benchmarking, monitoring, hallucination detection, bias analysis, safety testing, and production observability.

Modern AI systems are no longer single models. They include:

- Large Language Models (LLMs)

- RAG pipelines

- AI agents

- Multi-step reasoning workflows

- Distributed inference systems

And increasingly:

- Multi-agent orchestration frameworks

- Tool-using autonomous systems

- Memory-driven AI architectures

Because of this complexity, AI evaluation tools now fall into seven major categories:

- Testing

- Evaluation Frameworks & Experimentation

- Agent & Orchestration Frameworks

- Observability

- Production Monitoring

- Bias & Responsible AI

- Safety & Adversarial Testing

Let’s break down the real platforms dominating each category in 2026.

Testing Tools for LLM & AI Systems

Testing generative AI is fundamentally different from traditional software testing. Outputs are probabilistic, not deterministic. You measure quality, not just correctness.

1. OpenAI Evals

An open-source benchmarking framework for large language models.

Why it matters:

- Create custom evaluation datasets

- Run automated regression tests

- Compare different model versions

- Detect hallucinations and instruction failures

It is widely used for structured benchmarking of GPT-style models.

2. DeepEval

A dedicated LLM evaluation framework designed for automated quality scoring.

Key strengths:

- Faithfulness scoring

- Answer relevance evaluation

- Custom evaluation metrics

- Automated test case execution

DeepEval helps engineers treat LLM outputs like unit-testable components.

3. Ragas

Purpose-built for evaluating Retrieval-Augmented Generation (RAG) systems.

Core metrics include:

- Context precision

- Context recall

- Faithfulness

- Answer correctness

If you’re building search-powered AI applications, Ragas is essential.

Evaluation Frameworks & Experiment Platforms

Testing outputs is not enough. AI teams need structured experiment tracking, dataset management, and version comparison.

1. LangSmith

Built for LLM applications and AI agents.

Key features:

- Prompt version tracking

- Trace-level debugging

- Dataset-driven evaluation

- Agent workflow inspection

Critical for teams building multi-step chains and AI agents.

2. Braintrust

A modern experimentation and evaluation platform.

Why it stands out:

- Evaluation dataset management

- Model comparison dashboards

- Human-in-the-loop + automated scoring

- Prompt iteration tracking

Braintrust enables structured, scalable evaluation pipelines.

3. Weights & Biases

Originally built for ML experiment tracking, now heavily used for LLM evaluation.

Used for:

- Experiment tracking

- Model comparison dashboards

- Metric visualization

- Integration with PyTorch, TensorFlow, Hugging Face

Ensures reproducibility and structured experimentation.

4. MLflow

A lifecycle management platform for ML systems.

Tracks:

- Model versions

- Parameters

- Evaluation metrics

- Deployment stages

Essential for CI/CD-driven AI workflows.

Agent & Orchestration Frameworks (New Critical Layer in 2026)

In 2026, AI systems are increasingly agent-based. Evaluation engineers must understand how agents reason, coordinate, call tools, and manage memory.

Here are the key platforms shaping agentic AI:

1. CrewAI

A multi-agent orchestration framework focused on role-based autonomous agents.

Why it matters:

- Multi-agent collaboration workflows

- Task delegation between agents

- Structured role-based execution

- Enterprise automation

Eval engineers test:

- Agent coordination quality

- Task success rate

- Tool usage correctness

- Failure recovery behavior

2. LangGraph (by LangChain)

A graph-based agent orchestration system built for complex workflows.

Key advantages:

- Stateful agent execution

- Branching reasoning paths

- Deterministic + agentic hybrid flows

- Deep integration with LangChain ecosystem

Critical for evaluating multi-step reasoning agents and dynamic decision trees.

3. AutoGen (by Microsoft)

Designed for multi-agent conversational collaboration.

Why it’s important:

- Agent-to-agent conversation modeling

- Tool-using AI systems

- Autonomous task solving

- Research-grade agent simulations

AI Eval Engineers must evaluate:

- Conversation stability

- Task decomposition accuracy

- Long-horizon reasoning quality

4. DSPy

A declarative framework for optimizing LLM pipelines.

What makes it unique:

- Programmatic prompt optimization

- Automatic metric-based tuning

- Declarative LLM programming

It changes evaluation from manual tuning to optimization-driven experimentation.

5. LlamaIndex

A powerful data framework for building RAG and memory-based systems.

Why it matters:

- Data ingestion pipelines

- Indexing and retrieval evaluation

- Structured memory systems

- Tool-augmented generation

Eval engineers use it to measure:

- Retrieval quality

- Context relevance

- Memory consistency

- Data grounding reliability

Agent frameworks are now part of the evaluation surface area. Testing only outputs is not enough. You must test reasoning chains, tool calls, memory states, and coordination logic.

Observability for AI Pipelines

Observability answers the most important debugging question:

Why did the model behave this way?

Modern AI systems are distributed across APIs, vector databases, embeddings, and inference endpoints.

1. OpenTelemetry

A standardized tracing and metrics framework.

Why it matters:

- Distributed tracing

- Latency tracking

- Infrastructure visibility

- Integration with Grafana, Datadog, cloud stacks

OpenTelemetry connects complex AI pipelines into a single trace.

2. Hugging Face & Open LLM Leaderboards

Provides:

- Standard benchmark datasets

- Model comparison leaderboards

- Evaluation pipelines

Used for:

- MMLU benchmarking

- Multilingual testing

- Reasoning evaluation

Production Monitoring & Reliability

Monitoring ensures models behave correctly after deployment.

LLM failures are subtle:

- Hallucinations

- Drift

- Bias

- Unsafe outputs

1. Arize AI

A leading AI observability platform.

Tracks:

- Output drift

- Embedding drift

- Hallucination rates

- Performance degradation

Critical for large-scale production AI systems.

2. Galileo

Specializes in LLM evaluation and hallucination detection.

Focus areas:

- Root cause analysis

- Prompt debugging

- Retrieval evaluation

- Hallucination detection

Especially powerful for RAG systems.

3. WhyLabs

Focused on:

- Data drift detection

- Anomaly detection

- AI system reliability

Useful for maintaining stable LLM pipelines.

Bias, Fairness & Responsible AI

AI evaluation must include fairness and compliance checks, especially in regulated industries.

1. AI Fairness 360

A comprehensive fairness toolkit.

Helps:

- Detect demographic bias

- Apply mitigation algorithms

- Generate fairness reports

Essential in healthcare, finance, and government applications.

2. Responsible AI Toolbox

Evaluates:

- Fairness

- Explainability

- Error analysis

- Causal insights

Strong for enterprise-grade AI systems.

AI Safety & Adversarial Testing

Public-facing AI systems must be tested against attacks.

1. Lakera Guard

Focus areas:

- Prompt injection detection

- Jailbreak resistance

- Data leakage prevention

Highly relevant for AI products exposed to users.

2. Anthropic Safety Evaluations

Structured alignment and safety methodologies used for:

- Harmful content detection

- Model alignment evaluation

- Policy compliance testing

These approaches influence how modern AI safety pipelines are designed.

The Modern AI Eval Stack in 2026

A real-world AI evaluation stack typically looks like this:

Testing

OpenAI Evals, DeepEval, Ragas

Frameworks & Experimentation

LangSmith, Braintrust, Weights & Biases, MLflow

Agent & Orchestration

CrewAI, LangGraph, AutoGen, DSPy, LlamaIndex

Observability

OpenTelemetry, Hugging Face Benchmarks

Monitoring

Arize AI, Galileo, WhyLabs

Bias & Responsible AI

AI Fairness 360, Responsible AI Toolbox

Safety & Adversarial Testing

Lakera Guard, Anthropic Safety Evaluations

Final Thoughts

In 2026, AI evaluation is not just about accuracy.

It is about:

- Reliability

- Safety

- Bias detection

- Hallucination monitoring

- Real-world robustness

- Continuous production tracking

- Agent stability

- Tool usage validation

- Long-horizon reasoning integrity

AI evaluation is now:

- Continuous

- Automated

- Integrated into CI/CD

- Safety-aware

- Observability-driven

- Agent-centric

If you want to become a successful AI Eval Engineer, mastering platforms like CrewAI, LangGraph, AutoGen, DSPy, LlamaIndex, OpenAI Evals, LangSmith, Arize AI, Braintrust, and DeepEval is just as important as understanding machine learning theory.

Because in 2026:

Building AI is easy.

Evaluating it correctly is what makes you valuable.